MNIST-Dataset-Digit-Recognizer ¶

Dataset Digit Recognizer MNIST (Modified National Institute of Standards and Technology dataset) ¶

This classic dataset of handwritten digit images has served as a basis for benchmarking classification algorithms. As new machine learning techniques emerge, MNIST remains a reliable resource for researchers and learners alike.

We will use Keras with TensorFlow as the main package to create a simple neural network that predicts, as accurately as we can, digits from handwritten images. Two optimizers are natural candidates for this task: the plain vanilla Stochastic Gradient Descent optimizer and the Adam optimizer; the code in this post uses Adam. There are many other parameters, such as the number of training epochs, which we will not be experimenting with. Lastly, we discuss dropout, a form of regularisation used in neural networks to prevent overfitting.

!pip3 install tensorflow

Defaulting to user installation because normal site-packages is not writeable
Requirement already satisfied: tensorflow in ./.local/lib/python3.7/site-packages (2.4.1)

The dataset contains 60,000 training images and 10,000 test images. Here I load the data, which comes already split into training and test sets. x_train and x_test contain the grayscale pixel values (0 to 255), while y_train and y_test contain the labels 0 to 9, the digits that the images represent.

import tensorflow as tf
mnist = tf.keras.datasets.mnist  # MNIST ("Modified National Institute of Standards and Technology")
(x_train, y_train), (x_test, y_test) = mnist.load_data()

import matplotlib.pyplot as plt
print(x_train[0])

[[  0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0]
 [  0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0]
 [  0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0]
 [  0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0]
 [  0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0]
 [  0   0   0   0   0   0   0   0   0   0   0   0   3  18  18  18 126 136 175  26 166 255 247 127   0   0   0   0]
 [  0   0   0   0   0   0   0   0  30  36  94 154 170 253 253 253 253 253 225 172 253 242 195  64   0   0   0   0]
 [  0   0   0   0   0   0   0  49 238 253 253 253 253 253 253 253 253 251  93  82  82  56  39   0   0   0   0   0]
 [  0   0   0   0   0   0   0  18 219 253 253 253 253 253 198 182 247 241   0   0   0   0   0   0   0   0   0   0]
 [  0   0   0   0   0   0   0   0  80 156 107 253 253 205  11   0  43 154   0   0   0   0   0   0   0   0   0   0]
 [  0   0   0   0   0   0   0   0   0  14   1 154 253  90   0   0   0   0   0   0   0   0   0   0   0   0   0   0]
 [  0   0   0   0   0   0   0   0   0   0   0 139 253 190   2   0   0   0   0   0   0   0   0   0   0   0   0   0]
 [  0   0   0   0   0   0   0   0   0   0   0  11 190 253  70   0   0   0   0   0   0   0   0   0   0   0   0   0]
 [  0   0   0   0   0   0   0   0   0   0   0   0  35 241 225 160 108   1   0   0   0   0   0   0   0   0   0   0]
 [  0   0   0   0   0   0   0   0   0   0   0   0   0  81 240 253 253 119  25   0   0   0   0   0   0   0   0   0]
 [  0   0   0   0   0   0   0   0   0   0   0   0   0   0  45 186 253 253 150  27   0   0   0   0   0   0   0   0]
 [  0   0   0   0   0   0   0   0   0   0   0   0   0   0   0  16  93 252 253 187   0   0   0   0   0   0   0   0]
 [  0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0 249 253 249  64   0   0   0   0   0   0   0]
 [  0   0   0   0   0   0   0   0   0   0   0   0   0   0  46 130 183 253 253 207   2   0   0   0   0   0   0   0]
 [  0   0   0   0   0   0   0   0   0   0   0   0  39 148 229 253 253 253 250 182   0   0   0   0   0   0   0   0]
 [  0   0   0   0   0   0   0   0   0   0  24 114 221 253 253 253 253 201  78   0   0   0   0   0   0   0   0   0]
 [  0   0   0   0   0   0   0   0  23  66 213 253 253 253 253 198  81   2   0   0   0   0   0   0   0   0   0   0]
 [  0   0   0   0   0   0  18 171 219 253 253 253 253 195  80   9   0   0   0   0   0   0   0   0   0   0   0   0]
 [  0   0   0   0  55 172 226 253 253 253 253 244 133  11   0   0   0   0   0   0   0   0   0   0   0   0   0   0]
 [  0   0   0   0 136 253 253 253 212 135 132  16   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0]
 [  0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0]
 [  0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0]
 [  0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0]]

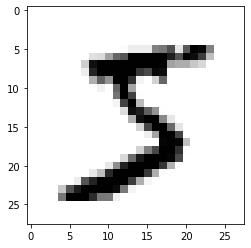

plt.imshow(x_train[0], cmap=plt.cm.binary)  # show the image with the binary colour map, i.e. as a greyscale image

<matplotlib.image.AxesImage at 0x7fc8252e7950>

x_train = tf.keras.utils.normalize(x_train, axis=1)
x_test = tf.keras.utils.normalize(x_test, axis=1)
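To make the normalization step more concrete, here is a minimal plain-Python sketch of what I understand `tf.keras.utils.normalize` to do with its default settings: L2 normalization along the chosen axis, which for `axis=1` on a batch of images means each column of each image is scaled to unit length. Note that this is not the same as dividing by 255. The helper name `l2_normalize_columns` and the 3 x 3 toy image are assumptions made for illustration only.

```python
import math

def l2_normalize_columns(image):
    """Scale each column of a 2D image (a list of rows) to unit L2 norm.

    All-zero columns are left unchanged to avoid dividing by zero.
    """
    n_rows, n_cols = len(image), len(image[0])
    norms = []
    for j in range(n_cols):
        norm = math.sqrt(sum(image[i][j] ** 2 for i in range(n_rows)))
        norms.append(norm if norm > 0 else 1.0)
    return [[image[i][j] / norms[j] for j in range(n_cols)] for i in range(n_rows)]

# Hypothetical 3 x 3 "image" with raw pixel values in the range 0..255.
toy_image = [
    [0, 3, 0],
    [0, 4, 255],
    [0, 0, 0],
]

normalized = l2_normalize_columns(toy_image)
for row in normalized:
    print(row)
```

After this step every value lies between 0 and 1, which generally makes training easier than working with raw 0 to 255 values.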

Check the shape of the data to see if it is compatible with the network. The full training set has shape (60000, 28, 28), which means that we have 60,000 images in our dataset and each image is 28 × 28 pixels, so the shape of a single image is (28, 28).

print(x_train[0].shape)

(28, 28)

Building the Model: ¶

Use the Keras API to build the model. Here I use the Sequential model from Keras and add a Flatten layer followed by Dense layers; Conv2D, MaxPooling and Dropout layers are common additions for image models but are not used in the code in this post. The Flatten layer flattens each 2D array into a 1D array before the fully connected layers, while Dropout layers, when added, fight overfitting by randomly disregarding some of the neurons during training.
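As a rough illustration of how dropout disregards neurons during training, here is a small plain-Python sketch of "inverted" dropout, the variant in which surviving activations are rescaled so their expected value stays the same. The function name `dropout`, the activation values and the rate of 0.5 are all assumptions chosen for the example, not part of the model in this post.

```python
import random

def dropout(activations, rate, training, rng):
    """Inverted dropout on a list of activations.

    During training each activation is zeroed with probability `rate`
    and the survivors are scaled by 1 / (1 - rate).
    At inference time the activations pass through unchanged.
    """
    if not training or rate == 0.0:
        return list(activations)
    keep_scale = 1.0 / (1.0 - rate)
    return [a * keep_scale if rng.random() >= rate else 0.0 for a in activations]

rng = random.Random(0)  # fixed seed so repeated runs give the same mask
hidden = [0.5, 1.2, 0.0, 2.0, 0.7, 1.5]  # hypothetical layer outputs

train_out = dropout(hidden, rate=0.5, training=True, rng=rng)
infer_out = dropout(hidden, rate=0.5, training=False, rng=rng)

print("training :", train_out)
print("inference:", infer_out)
```

Because a different random subset of neurons is silenced on every training step, the network cannot rely too heavily on any single neuron, which is the intuition behind its regularising effect.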

model = tf.keras.models.Sequential()  # sequential model: layers are stacked in order

model.add(tf.keras.layers.Flatten())  # 28 x 28 image -> 784 values
model.add(tf.keras.layers.Dense(128, activation=tf.nn.relu))
model.add(tf.keras.layers.Dense(128, activation=tf.nn.relu))
model.add(tf.keras.layers.Dense(10, activation=tf.nn.softmax))  # one probability per digit
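To get a feel for the size of this network, the sketch below flattens a 28 x 28 image in plain Python and counts the trainable parameters of the three Dense layers. The helper names `flatten` and `dense_params` are made up for this example; the layer sizes are the ones used in the model above.

```python
def flatten(image):
    """Turn a 2D image (a list of rows) into one 1D list, row by row."""
    return [pixel for row in image for pixel in row]

def dense_params(n_inputs, n_units):
    """A fully connected layer has one weight per input-unit pair plus one bias per unit."""
    return n_inputs * n_units + n_units

blank_image = [[0] * 28 for _ in range(28)]
n_features = len(flatten(blank_image))
print("Values per flattened image:", n_features)  # 784

layer_units = [128, 128, 10]  # Dense layer sizes from the model above
total, n_in = 0, n_features
for units in layer_units:
    count = dense_params(n_in, units)
    print(f"Dense({units}): {count} parameters")
    total += count
    n_in = units

print("Total trainable parameters:", total)  # 118282
```

Most of the parameters (100,480 of them) sit in the first Dense layer, because it connects all 784 pixels to 128 units.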

Compiling and fitting the Model: ¶

So far, we have created an untrained network (a plain fully connected one rather than a CNN). Next I compile it with an optimizer, a loss function and a metric, and then fit the model on our training data. The Adam optimizer is often said to outperform other optimizers, which is why I used it.
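For readers curious how Adam differs from plain gradient descent, here is a toy sketch of the two update rules applied to a one-dimensional loss, f(w) = (w - 3)^2. The loss function, the learning rate of 0.1 and the step count are assumptions chosen to keep the example small; the sketch only shows how the updates are computed and says nothing about which optimizer is better on MNIST.

```python
import math

def grad(w):
    """Gradient of the toy loss f(w) = (w - 3)^2."""
    return 2.0 * (w - 3.0)

def sgd(w, steps, lr=0.1):
    """Plain gradient descent: move against the gradient by a fixed factor."""
    for _ in range(steps):
        w -= lr * grad(w)
    return w

def adam(w, steps, lr=0.1, beta1=0.9, beta2=0.999, eps=1e-7):
    """Adam: keep running averages of the gradient (m) and its square (v),
    correct their start-up bias, and scale each step by sqrt(v)."""
    m = v = 0.0
    for t in range(1, steps + 1):
        g = grad(w)
        m = beta1 * m + (1 - beta1) * g
        v = beta2 * v + (1 - beta2) * g * g
        m_hat = m / (1 - beta1 ** t)
        v_hat = v / (1 - beta2 ** t)
        w -= lr * m_hat / (math.sqrt(v_hat) + eps)
    return w

w_sgd = sgd(0.0, steps=300)
w_adam = adam(0.0, steps=300)
print("SGD  ends at w =", w_sgd)
print("Adam ends at w =", w_adam)
```

Both runs start at w = 0 and should end close to the minimum at w = 3. The practical appeal of Adam is that the per-parameter scaling makes it less sensitive to the choice of learning rate on real networks.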

model.compile(optimizer='adam',loss='sparse_categorical_crossentropy',metrics=['accuracy'])
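The loss and metric named in `compile` can be written out by hand for a tiny example. The sketch below computes sparse categorical cross-entropy (the negative log of the probability assigned to the true class, averaged over the samples) and accuracy in plain Python. The three-class probabilities and labels are invented for illustration, and the small clipping that Keras applies to avoid log(0) is left out.

```python
import math

def sparse_categorical_crossentropy(probabilities, labels):
    """Mean of -log(p[true_class]) over all samples; labels are integer class ids."""
    losses = [-math.log(p[y]) for p, y in zip(probabilities, labels)]
    return sum(losses) / len(losses)

def accuracy(probabilities, labels):
    """Fraction of samples whose most probable class equals the label."""
    hits = sum(1 for p, y in zip(probabilities, labels) if p.index(max(p)) == y)
    return hits / len(labels)

# Hypothetical model outputs for two samples and three classes.
probs = [
    [0.1, 0.7, 0.2],  # true class 1: confident and correct, small loss
    [0.8, 0.1, 0.1],  # true class 2: confident and wrong, large loss
]
labels = [1, 2]

loss = sparse_categorical_crossentropy(probs, labels)
acc = accuracy(probs, labels)
print("loss:", loss)
print("accuracy:", acc)  # 0.5
```

"Sparse" here only means that the labels are given as integers (0 to 9 for MNIST) rather than as one-hot vectors.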

model.fit(x_train, y_train, epochs=10)

Epoch 1/10
1875/1875 [==============================] - 4s 2ms/step - loss: 0.4850 - accuracy: 0.8645
Epoch 2/10
1875/1875 [==============================] - 5s 2ms/step - loss: 0.1146 - accuracy: 0.9667
Epoch 3/10
1875/1875 [==============================] - 4s 2ms/step - loss: 0.0697 - accuracy: 0.9773
Epoch 4/10
1875/1875 [==============================] - 4s 2ms/step - loss: 0.0514 - accuracy: 0.9831
Epoch 5/10
1875/1875 [==============================] - 4s 2ms/step - loss: 0.0402 - accuracy: 0.9869
Epoch 6/10
1875/1875 [==============================] - 4s 2ms/step - loss: 0.0290 - accuracy: 0.9906
Epoch 7/10
1875/1875 [==============================] - 5s 2ms/step - loss: 0.0223 - accuracy: 0.9929
Epoch 8/10
1875/1875 [==============================] - 4s 2ms/step - loss: 0.0192 - accuracy: 0.9930
Epoch 9/10
1875/1875 [==============================] - 4s 2ms/step - loss: 0.0157 - accuracy: 0.9947
Epoch 10/10
1875/1875 [==============================] - 5s 3ms/step - loss: 0.0119 - accuracy: 0.9959

<tensorflow.python.keras.callbacks.History at 0x7fc826bd4a90>

val_loss, val_acc = model.evaluate(x_test, y_test)

313/313 [==============================] - 1s 2ms/step - loss: 0.1124 - accuracy: 0.9728
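The step counts in the progress bars (1875 during training, 313 during evaluation) follow from the dataset sizes, assuming the Keras default batch size of 32, since no batch size is passed in this post. A quick check of the arithmetic, with `steps_per_epoch` being a helper name made up for this example:

```python
import math

def steps_per_epoch(n_samples, batch_size=32):
    """Number of batches needed to see every sample once (the last batch may be smaller)."""
    return math.ceil(n_samples / batch_size)

train_steps = steps_per_epoch(60_000)  # training images
test_steps = steps_per_epoch(10_000)   # test images
print(train_steps, test_steps)  # 1875 313
```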

Model Evaluation: ¶

When this model is evaluated on the test set, we see that just 10 epochs gave us an accuracy of 97.28% with a loss of about 0.112, compared with 99.59% accuracy on the training data in the final epoch.

print(val_loss)
print(val_acc)

0.11244665831327438
0.9728000164031982

model.save('mnist_digit.model')

INFO:tensorflow:Assets written to: mnist_digit.model/assets

predictions = model.predict(x_test)
print(predictions)

[[3.22702544e-14 3.51989420e-14 5.57353896e-09 ... 1.00000000e+00 1.60817892e-13 2.40715542e-13]
 [8.56872416e-19 1.27712987e-08 1.00000000e+00 ... 2.81515991e-11 1.06037565e-17 1.32803862e-22]
 [3.79793225e-10 9.99989152e-01 5.12245833e-07 ... 1.00320940e-05 2.02710496e-07 4.03521799e-11]
 ...
 [8.25261289e-17 3.40463356e-12 2.62771077e-17 ... 1.34488722e-08 1.85024285e-14 1.18482317e-08]
 [1.26037147e-12 6.74442978e-13 1.62169692e-14 ... 3.93779592e-10 2.12413988e-06 7.47177758e-15]
 [1.57967750e-10 3.43449249e-12 8.06131203e-11 ... 2.16600704e-13 3.22796434e-12 2.31840290e-13]]

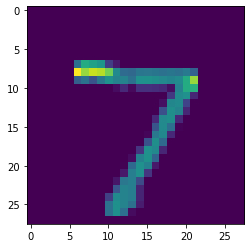

import numpy as np
print(np.argmax(predictions[0]))

7
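Each row of `predictions` holds ten probabilities produced by the softmax output layer, and the predicted digit is simply the index of the largest one. The plain-Python sketch below shows both steps on an invented vector of ten raw scores; the score values and the helper names `softmax` and `argmax` are assumptions for illustration, not values taken from the trained model.

```python
import math

def softmax(scores):
    """Convert raw scores into probabilities that sum to 1.

    Subtracting the maximum first keeps exp() from overflowing.
    """
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def argmax(values):
    """Index of the largest value."""
    return max(range(len(values)), key=lambda i: values[i])

# Hypothetical raw scores for the digits 0..9; the score for 7 is clearly largest.
scores = [-2.0, -1.5, 0.3, 1.1, -3.0, -0.4, -2.2, 9.0, -1.0, 0.5]

probs = softmax(scores)
predicted_digit = argmax(probs)
print(predicted_digit)  # 7
```

This is why values such as 1.00000000e+00 in one column and numbers around 1e-14 elsewhere in a row indicate a very confident prediction.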

plt.imshow(x_test[0])

<matplotlib.image.AxesImage at 0x7fc2ec6824d0>

model.evaluate(x_test, y_test)

313/313 [==============================] - 1s 2ms/step - loss: 0.1124 - accuracy: 0.9728

[0.11244665831327438, 0.9728000164031982]

Here we select an image, run it through the model to get the prediction, and then display both the image and the prediction to see if it's accurate.

image_index = 285
plt.imshow(x_test[image_index].reshape(28, 28), cmap='Greys')
pred = model.predict(x_test[image_index].reshape(1, 28, 28))  # a batch of one image, same shape as the training input
print(pred.argmax())

2

image_index = 6000
plt.imshow(x_test[image_index].reshape(28, 28), cmap='Greys')
pred = model.predict(x_test[image_index].reshape(1, 28, 28))  # a batch of one image, same shape as the training input
print(pred.argmax())

9
