Guide to Kubeflow installation and pipelines on a GKE cluster ¶

Kubeflow is a Machine Learning toolkit for Kubernetes. ¶

This Kubeflow installation guide introduces you to the Kubeflow Pipelines user interface (UI) and gets a simple pipeline running quickly.

A Machine Learning workflow can involve many steps with dependencies on each other, from data preparation and analysis, to training, to evaluation, to deployment, and more. It is hard to compose and track these processes in an ad hoc manner (for example, in a set of notebooks or scripts), and auditing and reproducibility become increasingly problematic. Kubeflow Pipelines (KFP) helps solve these issues by providing a way to deploy robust, repeatable Machine Learning pipelines along with monitoring, auditing, version tracking, and reproducibility. Cloud AI Pipelines makes it easy to set up a KFP installation.

You will learn how to install Kubeflow Pipelines on a GKE cluster.

What you'll need ¶

- A basic understanding of Kubernetes will be helpful but not necessary

- An active GCP project for which you have Owner permissions

- (Optional) A GitHub account

- Access to the Google Cloud Shell, available in the Google Cloud Platform (GCP) Console

Visit the GCP Console in the browser and log in with your project credentials: ¶

Then click the "Activate Cloud Shell" icon in the top right of the console to start up a Cloud Shell.

When you start up the Cloud Shell, it will tell you the name of the project it's set to use. Check that this setting is correct.

To find your project ID, visit the GCP Console's Home panel. If the screen is empty, click 'Yes' at the prompt to create a dashboard.

Then, in the Cloud Shell terminal, run these commands if necessary to configure gcloud to use the correct project:

export PROJECT_ID=your_project_id

gcloud config set project ${PROJECT_ID}
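The check-then-set step described above can be sketched as a small script. This is only an illustration: `needs_project_switch` and `ensure_project` are hypothetical helper names, not part of gcloud, and the script assumes `PROJECT_ID` has already been exported.

```shell
#!/bin/sh
# Sketch: switch the active gcloud project only when it differs from the desired one.
# needs_project_switch and ensure_project are hypothetical helpers, not gcloud commands.

# Succeeds (exit 0) when a desired project is given and it differs from the current one.
needs_project_switch() {
  [ -n "$2" ] && [ "$1" != "$2" ]
}

# $1 = desired project ID
ensure_project() {
  # `gcloud config get-value project` prints the currently configured project ID.
  current_project="$(gcloud config get-value project 2>/dev/null)"
  if needs_project_switch "$current_project" "$1"; then
    gcloud config set project "$1"
  else
    echo "Project already set to ${current_project}"
  fi
}

# Only call gcloud when it is installed and PROJECT_ID is defined.
if command -v gcloud >/dev/null 2>&1 && [ -n "${PROJECT_ID:-}" ]; then
  ensure_project "$PROJECT_ID"
fi
```

Running it in Cloud Shell after exporting `PROJECT_ID` leaves the configuration untouched when the project is already correct.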

Create a storage bucket ¶

Note: Bucket names must be unique across all of GCP, not just within your organization. ¶

Create a Cloud Storage bucket for storing pipeline files. You'll need to use a globally unique ID, so it is convenient to define a bucket name that includes your project ID. Create the bucket using the gsutil mb (make bucket) command:

export PROJECT_ID=your_project_id

export BUCKET_NAME=kubeflow-${PROJECT_ID}

gsutil mb gs://${BUCKET_NAME}
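Because a badly formed name makes `gsutil mb` fail, it can help to sanity-check the name first. The following is a minimal sketch, not a complete validator: `is_valid_bucket_name` and `create_bucket` are hypothetical helpers, and the check covers only a simplified subset of the Cloud Storage naming rules (3 to 63 characters, lowercase letters, digits, dashes and underscores, starting and ending with a letter or digit, no `goog` prefix). Names containing dots are not handled.

```shell
#!/bin/sh
# Sketch: validate a bucket name before creating it.
# Simplified subset of the Cloud Storage naming rules; dotted names are not handled.

is_valid_bucket_name() {
  # 3-63 characters, lowercase letters/digits/dashes/underscores,
  # starting and ending with a letter or digit.
  printf '%s' "$1" |
    LC_ALL=C grep -Eq '^[a-z0-9][a-z0-9_-]{1,61}[a-z0-9]$' || return 1
  # Names may not begin with the "goog" prefix.
  case "$1" in
    goog*) return 1 ;;
  esac
  return 0
}

# $1 = bucket name (without the gs:// scheme)
create_bucket() {
  if ! is_valid_bucket_name "$1"; then
    echo "Invalid bucket name: $1" >&2
    return 1
  fi
  gsutil mb "gs://$1"
}

# Only call gsutil when it is installed and PROJECT_ID is defined.
if command -v gsutil >/dev/null 2>&1 && [ -n "${PROJECT_ID:-}" ]; then
  create_bucket "kubeflow-${PROJECT_ID}"
fi
```

A name that passes this check can still be rejected by Cloud Storage if another project already owns it, which is why including the project ID in the name is convenient.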

Create an AI Platform Pipelines (Hosted Kubeflow Pipelines) installation ¶

Before you begin, check that your Google Cloud project is correctly set up, that you have sufficient permissions to deploy AI Platform Pipelines, and that billing is enabled for your Cloud project.

Use the following instructions to check if you have been granted the roles required to deploy AI Platform Pipelines.

- Open a Cloud Shell session. Cloud Shell opens in a frame at the bottom of the Google Cloud Console.

- You must have the Viewer (roles/viewer) and Kubernetes Engine Admin (roles/container.admin) roles on the project, or other roles that include the same permissions such as the Owner (roles/owner) role on the project, to deploy AI Platform Pipelines. Run the following command in Cloud Shell to list the members of the Viewer and Kubernetes Engine Admin roles.

gcloud projects get-iam-policy PROJECT_ID \
  --flatten="bindings[].members" \
  --format="table(bindings.role, bindings.members)" \
  --filter="bindings.role:roles/container.admin OR bindings.role:roles/viewer"

Replace PROJECT_ID with the ID of your Google Cloud project.
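To check a single account rather than read the whole table, the same command can be filtered by member and its output tested against the role requirement stated above. This is a hedged sketch: `has_required_roles` and `list_member_roles` are hypothetical helpers, and `MEMBER` is an assumed variable holding an IAM member string such as `user:you@example.com`. It only sees roles bound directly on the project, so roles inherited from a folder, an organization, or a group membership will not show up.

```shell
#!/bin/sh
# Sketch: decide whether one member holds the roles needed to deploy AI Platform Pipelines.

# Reads role names (one per line) on stdin. Succeeds when the list contains
# roles/owner, or both roles/viewer and roles/container.admin.
has_required_roles() {
  roles="$(cat)"
  # Owner includes the permissions of both required roles.
  if printf '%s\n' "$roles" | grep -qxF 'roles/owner'; then
    return 0
  fi
  printf '%s\n' "$roles" | grep -qxF 'roles/viewer' &&
    printf '%s\n' "$roles" | grep -qxF 'roles/container.admin'
}

# $1 = project ID, $2 = IAM member string (assumed form: user:you@example.com)
list_member_roles() {
  gcloud projects get-iam-policy "$1" \
    --flatten="bindings[].members" \
    --format="value(bindings.role)" \
    --filter="bindings.members:$2"
}

# Only call gcloud when it is installed and both variables are defined.
if command -v gcloud >/dev/null 2>&1 && [ -n "${PROJECT_ID:-}" ] && [ -n "${MEMBER:-}" ]; then
  if list_member_roles "$PROJECT_ID" "$MEMBER" | has_required_roles; then
    echo "${MEMBER} has the roles needed to deploy AI Platform Pipelines"
  else
    echo "${MEMBER} is missing a required role" >&2
  fi
fi
```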

Setting up your AI Platform Pipelines instance ¶

Use the following instructions to set up AI Platform Pipelines on a new GKE cluster.

Open AI Platform Pipelines in the Google Cloud Console.

Go to AI Platform Pipelines

Click Select project. A dialog prompting you to select a Google Cloud project appears.

Select the Google Cloud project you want to use for this quickstart, then click Open.

In the AI Platform Pipelines toolbar, click New instance. Kubeflow Pipelines opens in Google Cloud Marketplace.

Click Configure. A form opens for you to configure your Kubeflow Pipelines deployment.

If the Create a new cluster link is displayed, click Create a new cluster. Otherwise, continue to the next step.

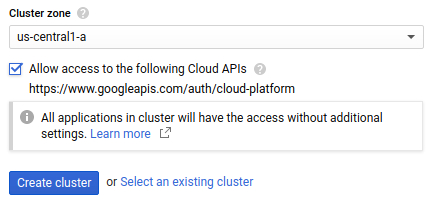

Select us-central1-a as the Cluster zone where your GKE cluster should be created.

Check Allow access to the following Cloud APIs to grant applications that run on your GKE cluster access to Google Cloud resources. By checking this box, you are granting your cluster access to the https://www.googleapis.com/auth/cloud-platform access scope. This access scope provides full access to the Google Cloud resources that you have enabled in your project. Granting your cluster access to Google Cloud resources in this manner saves you the effort of creating a Kubernetes secret.

Click Create cluster to create your GKE cluster. This process takes several minutes to complete.

After your cluster has been created, supply the following information:

- Namespace: Select default as the namespace.

- App instance name: Enter pipelines-quickstart as the instance name.

Click Deploy to deploy Kubeflow Pipelines onto your new GKE cluster.

The deployment process takes several minutes to complete. After the deployment process is finished, continue to the next section.

Find the AI Platform Pipelines cluster named pipelines-quickstart, then click Open pipelines dashboard to open Kubeflow Pipelines. The Kubeflow Pipelines dashboard opens, displaying the Getting Started page. ¶

The Kubeflow Pipelines dashboard is shown below: ¶

With the help of this Kubeflow installation guide, you are now able to install Kubeflow Pipelines on a Google Kubernetes Engine cluster.
